What Is Wrong With This Code? A Real Debugging Workflow

Stuck asking 'what is wrong with this code?' Learn a practical debugging workflow to find and fix bugs faster, from interpreting errors to using AI assistants.

You're probably here because the code compiles, the app boots, the tests looked fine yesterday, and something still feels cursed today.

That's the annoying part about asking “what is wrong with this code”. The answer is rarely “one obvious typo.” More often, it's a bad assumption, a missing condition, an environment mismatch, or a bug hiding behind code that looks perfectly reasonable. The machine is calm. You are not.

Good debugging isn't magic, and it isn't a personality trait some developers are born with. It's a workflow. Once you stop treating bugs like random lightning strikes and start treating them like investigations, things get less dramatic and a lot more fixable.

Beyond the 'It Should Work' Panic

The phrase “but it should work” has ended many productive afternoons.

Most stalled debugging sessions don't fail because the developer is careless. They fail because the developer is now emotionally attached to a theory. You've already decided the bug is in the API call, or the state update, or the database query, so every new clue gets filtered through that belief.

That's not just a vibe problem. Developers often overlook cognitive biases like confirmation bias, and that kind of fixation was reportedly linked to 40% longer debugging times in a 2025 Stack Overflow survey of 90,000 engineers.

Your first bug is often your own certainty

A junior developer usually starts with one of two moves:

- Change random lines until the symptom changes.

- Re-read the same function twenty times, hoping guilt will make the bug confess.

Neither works reliably.

A better opening move is to force yourself to answer four plain questions:

- What exactly is failing: Crash, wrong output, stale UI, timeout, bad data, flaky test.

- What did you expect instead: Be specific. “The list should show five items” beats “the page is broken.”

- What changed recently: New dependency, refactor, config change, feature flag, merge conflict.

- What evidence do you already have: Logs, stack trace, screenshots, request payloads, repro steps.

If you can't answer those clearly, you're not debugging yet. You're circling the problem.

Practical rule: If your explanation of the bug includes the word “somehow,” slow down and gather evidence.

Why a second brain helps

An AI assistant can be useful in this context, but only if you use it as a skeptical partner rather than a magical mechanic.

A good workflow is to paste the failing snippet, the exact error, the expected behavior, and the repro steps. Then ask for three things only: likely hypotheses, checks to confirm each hypothesis, and the smallest safe experiment to run next. That keeps you from turning one bug into six fresh ones.

Used that way, an AI tool acts like a rubber duck that talks back. It doesn't replace judgment. It interrupts tunnel vision.
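The request described above can be assembled the same way every time. This is a minimal sketch, assuming a hypothetical `BugReport` container; the field names, the sample snippet, and the wording of the prompt are illustrative, not a required format.

```python
from dataclasses import dataclass, field


@dataclass
class BugReport:
    """Hypothetical container for the evidence you paste into an assistant."""
    snippet: str
    error: str
    expected: str
    repro_steps: list = field(default_factory=list)

    def to_prompt(self) -> str:
        steps = "\n".join(
            f"{number}. {step}" for number, step in enumerate(self.repro_steps, 1)
        )
        return (
            f"Failing code:\n{self.snippet}\n\n"
            f"Exact error:\n{self.error}\n\n"
            f"Expected behavior:\n{self.expected}\n\n"
            f"Steps to reproduce:\n{steps}\n\n"
            "Please give me only:\n"
            "- Likely hypotheses, most probable first.\n"
            "- A check that would confirm or rule out each one.\n"
            "- The smallest safe experiment to run next.\n"
        )


# Illustrative values only.
report = BugReport(
    snippet="total = sum(item['price'] for item in cart)",
    error="TypeError: unsupported operand type(s) for +: 'int' and 'str'",
    expected="total should be 30 for two items priced 10 and 20",
    repro_steps=["Load the sample cart", "Call the checkout total"],
)
prompt = report.to_prompt()
print(prompt)
```

Keeping the three asks fixed is the point: it nudges the answer toward checks you can run instead of a rewritten function you have to trust.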

Here's the part people skip. Debugging is not a side quest. It's where you learn how the system behaves instead of how you imagined it behaved. That's frustrating in the moment, but it's also how developers stop feeling helpless around unfamiliar code.

Your First Step: Reproduce the Bug Reliably

If you can't reproduce the bug on purpose, you're negotiating with a ghost.

That's why the first real step is always the same. Make the failure happen again. Then make it happen consistently. A mechanic doesn't fix “the weird noise” until they hear the weird noise. You shouldn't patch “the odd issue” until you can trigger it on command.

Build a minimal reproducible example

Most bug reports are too big. Someone pastes a giant file, says “it breaks around here,” and waits for a miracle. That usually gets ignored because nobody wants to reverse-engineer your whole project for free.

A minimal reproducible example is different. It strips the problem down until only the failing behavior remains.

Use this sequence:

- Remove unrelated code: Delete helpers, styling, wrappers, unrelated API calls, extra branches.

- Freeze inputs: Hardcode the values that trigger the issue.

- Keep setup explicit: Include the exact dependency, runtime, and configuration that matter.

- Stop when the bug disappears: The last thing you removed is often close to the cause.

A tiny repro does two useful things. It helps other people help you, and it helps you notice what condition the bug depends on.
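Here is what a stripped-down repro might look like. It is a sketch built around a hypothetical `page_slice` function with a deliberate off-by-one bug; the function, the inputs, and the expected value are all invented for illustration.

```python
def page_slice(items, page, page_size):
    """Hypothetical function under suspicion: returns one page of items."""
    start = page * page_size
    # Bug kept on purpose for the repro: the end index drops the last item.
    end = start + page_size - 1
    return items[start:end]


def repro():
    # Frozen inputs: the smallest values that still trigger the failure.
    items = ["a", "b", "c", "d", "e"]
    expected = ["a", "b", "c"]
    actual = page_slice(items, page=0, page_size=3)
    return expected, actual


expected, actual = repro()
print(f"expected={expected} actual={actual} bug_reproduced={expected != actual}")
```

Everything unrelated is gone: no framework, no network, no styling. If the mismatch disappears after you remove one more piece, that piece is worth a closer look.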

Stable bug versus slippery bug

Not all failures behave the same way. Some happen every single run. Others appear only when the moon is full, staging is under load, and someone opened Safari first.

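The distinction matters: a stable bug fails on every run with the same input, while a slippery bug depends on timing, load, or environment and needs stricter bookkeeping before you can trust any fix.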

For flaky or environment-sensitive issues, it helps to document a strict sequence:

- Start from a clean state.

- Use the same input every run.

- Note the runtime, browser, dependency version, or feature flag state.

- Record whether it fails every time or only under certain timing.

A bug you can trigger on demand is already halfway to being fixed.
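For a slippery bug, that sequence can be turned into a small harness. This sketch assumes a stand-in `flaky_operation` that fails on some runs by design; in practice you would call your own repro there. The seed and run count are arbitrary choices.

```python
import platform
import random
import sys


def flaky_operation(rng):
    """Stand-in for the code under test; fails on some runs by design."""
    return rng.random() > 0.3  # True means the run passed


def measure_failures(runs, seed):
    # A fixed seed keeps the "random" inputs identical across sessions.
    rng = random.Random(seed)
    failures = sum(1 for _ in range(runs) if not flaky_operation(rng))
    return {
        "python": sys.version.split()[0],
        "platform": platform.system(),
        "runs": runs,
        "failures": failures,
        "failure_rate": failures / runs,
    }


report = measure_failures(runs=200, seed=42)
print(report)
```

Recording the failure rate next to the runtime details gives you a baseline, so "it seems better now" becomes a number you can compare after a change.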

Teams that need a cleaner test discipline usually benefit from formal checklists, which tighten up the way you capture and verify failures.

What not to do

A few moves waste time almost every time:

- Don't patch before reproducing: You may hide the symptom and miss the cause.

- Don't trust memory: Write down exact steps. “Open page, click twice, wait, then submit” matters.

- Don't change five variables at once: If the bug disappears, you won't know why.

- Don't say “works for me” too early: That often means “I didn't hit the same conditions.”

When someone asks “what is wrong with this code,” the honest answer is often “we don't have a reliable way to make it fail yet.” That sounds slow, but it's faster than guessing.

How to Isolate the Problem and Read the Clues

Once the bug is reproducible, the job changes. You're no longer hunting in the dark. You're reading evidence.

Most bugs leave traces. Sometimes it's a stack trace. Sometimes it's a weird payload. Sometimes it's the awful moment when the app returns a result that is technically valid and completely wrong.

Read the error before you rewrite the function

Developers love skipping the message and sprinting to the code. Slow down.

A stack trace usually tells you three things: where execution failed, what call path led there, and what assumption broke. Find the error type and message first (JavaScript and Java print them at the top of the trace, Python prints them at the bottom), then walk through the frames until you hit your code. That's often the point where your system handed bad state to the next layer.

For runtime debugging, I still use strategic print(), console.log(), and temporary assertions more than people admit in polite company. The trick is to log questions, not feelings.

Bad log:

- “got here”

Better log:

- current input value

- branch taken

- state before mutation

- return value after transformation

That style reveals where reality diverges from your mental model.
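A sketch of the "better log" style using Python's standard `logging` module. The `apply_discount` function, the `SAVE10` code, and the discount rule are hypothetical; only the shape of the log lines matters.

```python
import logging

logging.basicConfig(level=logging.DEBUG, format="%(levelname)s %(message)s")
log = logging.getLogger("discount")


def apply_discount(order, code):
    """Hypothetical function; the names and discount rule are illustrative."""
    log.debug("input: order=%r code=%r", order, code)
    if code == "SAVE10":
        log.debug("branch: known code, applying ten percent discount")
        log.debug("state before mutation: total=%r", order["total"])
        order["total"] = round(order["total"] * 0.9, 2)
    else:
        log.debug("branch: unknown code, leaving total unchanged")
    log.debug("return: total=%r", order["total"])
    return order["total"]


result = apply_discount({"total": 50.0}, "SAVE10")
```

Each line answers one of the questions from the list: what came in, which branch ran, what the state was before it changed, and what went out.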

Silent bugs are nastier than loud ones

Crashes are rude, but at least they're honest. Silent bugs smile at you and ship nonsense.

One reported case with the Pima Indians Diabetes dataset showed a patient with “148 pregnancies” because an AI-generated script misinterpreted the index row, shifted glucose values into the wrong column, and skewed the whole analysis.

That example matters because the code can still run. No exception. No red screen. Just garbage in a nice table.

If the output is surprising, inspect the input before you inspect the algorithm.

When debugging data-heavy code, check a few raw rows manually. Validate field names, types, ordering, and obvious edge values. If a column says “pregnancies” and you see 148, your bug may be in loading or mapping, not in the regression, chart, or UI.
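A range check on loaded rows is often enough to catch a shifted column. The sketch below uses made-up sample rows and assumed plausibility limits; `WRONG_COLUMNS` simulates a loader that wrongly expects a leading index column.

```python
import csv
import io

# Made-up sample rows for illustration only.
DATA = "pregnancies,glucose,blood_pressure\n6,148,72\n1,85,66\n"

# Plausible ranges are assumptions chosen for this sketch.
LIMITS = {"pregnancies": (0, 20), "glucose": (0, 300)}

# Simulates a loader that wrongly assumes the file starts with an index column.
WRONG_COLUMNS = ["index", "pregnancies", "glucose"]


def load_rows(text, assumed_columns=None):
    reader = csv.reader(io.StringIO(text))
    header = next(reader)
    columns = assumed_columns or header
    return [dict(zip(columns, row)) for row in reader]


def out_of_range(rows, limits):
    """Return (row number, column, value) for every implausible value."""
    problems = []
    for number, row in enumerate(rows, start=1):
        for column, (low, high) in limits.items():
            value = float(row[column])
            if not low <= value <= high:
                problems.append((number, column, value))
    return problems


print(out_of_range(load_rows(DATA), LIMITS))
print(out_of_range(load_rows(DATA, WRONG_COLUMNS), LIMITS))
```

With the real header the check stays quiet. With the shifted mapping, the glucose values land under `pregnancies` and get flagged, even though nothing crashed.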

Trace the system, not just the line

A useful way to isolate code paths is to sketch the flow from input to output. Request comes in, parser transforms it, validator checks it, service mutates it, renderer displays it. Once you can see the path, you can put probes in the right spots instead of everywhere.
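One way to put probes at stage boundaries rather than everywhere is to run the stages through a small tracer. The three stages below are toy stand-ins, and `run_with_probes` is a hypothetical helper written for this sketch.

```python
def parse(raw):
    return [part.strip() for part in raw.split(",")]


def validate(fields):
    return [f for f in fields if f]


def transform(fields):
    return [f.upper() for f in fields]


def run_with_probes(value, stages):
    """Run each stage in order and record what it received and returned."""
    trace = []
    for stage in stages:
        result = stage(value)
        trace.append((stage.__name__, value, result))
        value = result
    return value, trace


output, trace = run_with_probes(" a, ,b ", [parse, validate, transform])
for name, received, returned in trace:
    print(f"{name}: {received!r} -> {returned!r}")
```

Reading the trace top to bottom shows the first stage whose output no longer matches what you expected, which is usually where to dig.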

If you like visual reasoning, converting code paths into diagrams helps more than people expect, especially when the logic is too tangled to hold in your head.

A note on flaky tests

End-to-end failures often mix real bugs with timing noise, selector drift, or environment lag. If your “broken code” only appears in Playwright or Cypress, it's worth ruling out the test setup before rewriting the feature itself.

The larger lesson is simple. Don't treat every failure as a logic problem. Sometimes the code is fine and the data is wrong. Sometimes the data is fine and the environment is lying. Sometimes the error message is the clue you kept stepping over.

Upgrade Your Toolkit for Finding the Culprit

Print debugging works. It also gets clumsy fast.

There's a point where adding one more console.log() feels like using a teaspoon to drain a basement. That's when better tools save both time and dignity.

Compare the main debugging tools

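Each tool answers a different question. Print statements show what a value was at one point. An interactive debugger shows how state changes step by step. Static analysis flags risky code before it runs. Version control history shows which change introduced a regression.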

The mistake is treating them like substitutes. They're complementary.

Interactive debuggers beat guesswork

A real debugger changes the game because it lets you pause execution and inspect the program while it's alive.

Set a breakpoint before the suspicious branch. Step into the function. Watch the value change. Check what was passed in versus what you assumed was passed in. This sounds basic, but it kills a surprising number of bugs that survive long sessions of staring.

I usually reach for an interactive debugger when:

- State changes across several layers: UI event to hook to service to API.

- Async behavior is involved: Promises, callbacks, task queues, retries.

- The bug appears after mutation: Something was valid, then stopped being valid.

- A branch shouldn't be possible: Yet somehow execution keeps taking it.
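In Python, the built-in `breakpoint()` drops you into the `pdb` debugger at that line. The sketch below guards it behind an environment variable so normal runs are unaffected; the `DEBUG_CART` variable name and the `cart_total` function are assumptions made up for this example.

```python
import os


def cart_total(items):
    """Hypothetical function; item shape and names are assumptions."""
    total = 0
    for item in items:
        # Pause only when explicitly requested, so normal runs are unaffected.
        if os.environ.get("DEBUG_CART") == "1":
            breakpoint()  # inspect `item` and `total`, then step with `n`
        total += item["price"] * item["qty"]
    return total


total = cart_total([{"price": 5, "qty": 2}, {"price": 3, "qty": 1}])
print(total)
```

Once paused, `p item` prints a value, `n` runs the next line, `s` steps into a call, and `c` continues. Most editors offer the same operations through clickable breakpoints.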

Static analysis catches trouble before runtime

Linters and static analysis tools are the picky senior reviewer who never gets tired. ESLint, Pylint, SonarQube, PMD, and similar tools won't replace debugging, but they reduce the number of bugs you have to debug in the first place.

This becomes more important as functions grow more tangled. Code with a cyclomatic complexity score over 10 reportedly correlates with 4x higher bug rates, and teams that enforce a limit below 10 reportedly see 25% fewer defects and 20% faster developer onboarding.

That's not an argument for worshipping one metric. It's a reminder that complicated control flow creates more places for bad assumptions to hide.

Complex code doesn't just take longer to read. It creates more wrong paths for the program to take.

If a function has nested conditionals, early returns, retries, fallback branches, and “temporary” flags from last quarter, split it. A smaller function is easier to test, easier to inspect in a debugger, and much easier to explain to another human.
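To get a feel for what the metric counts, here is a rough approximation built on Python's standard `ast` module. It is a sketch, not a replacement for a real analyzer: it counts only a handful of branching constructs, and code inside nested functions is also counted toward the outer function. The `ship` sample is invented.

```python
import ast

# Node types counted as decision points in this rough approximation.
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp)


def rough_complexity(source):
    """Return {function name: approximate cyclomatic complexity}."""
    scores = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            score = 1  # one path through a function with no branches
            for child in ast.walk(node):
                if isinstance(child, BRANCH_NODES):
                    score += 1
                elif isinstance(child, ast.BoolOp):
                    # `a and b and c` adds two extra decision points
                    score += len(child.values) - 1
            scores[node.name] = score
    return scores


SAMPLE = '''
def ship(order):
    if order.paid and order.in_stock:
        for item in order.items:
            if item.fragile:
                return "special"
        return "standard"
    return "hold"
'''

scores = rough_complexity(SAMPLE)
print(scores)  # {'ship': 5}
```

The sample scores 5: one base path, two `if` statements, one `for` loop, and one `and`. Every added branch is another path a test or a debugger session has to cover.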

Use version control like a forensic tool

When a bug appeared “recently,” git bisect is often faster than intuition.

The workflow is gloriously mechanical. Mark one commit as good, one as bad, let Git jump through the history, and run the same test or repro each time. You don't need a perfect theory. You need a reliable pass/fail check.

That method is especially good for:

- regressions after refactors

- bugs introduced across several merged branches

- cases where the diff is too large to eyeball confidently
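The pass/fail check can be a small script handed to `git bisect run`, which treats exit code 0 as good, 125 as "skip this commit", and other codes from 1 to 127 as bad. The sketch below assumes a placeholder `repro` function; treating `ImportError` as "cannot test this commit" is a choice made for this example, not a Git rule.

```python
GOOD, BAD, SKIP = 0, 1, 125  # exit codes understood by `git bisect run`


def check(repro):
    """Map one repro run to an exit code for `git bisect run`."""
    try:
        passed = repro()
    except ImportError:
        # Assumption: an import failure means this commit cannot be tested.
        return SKIP
    except Exception:
        return BAD
    return GOOD if passed else BAD


def repro():
    # Placeholder check; replace with the real pass/fail test for your bug.
    return sorted([3, 1, 2]) == [1, 2, 3]


exit_code = check(repro)
print(f"exit code: {exit_code}")
# In a real bisect script, finish with: raise SystemExit(exit_code)
```

A typical session would be `git bisect start`, `git bisect bad`, `git bisect good <commit>`, then `git bisect run python check_repro.py`, where `check_repro.py` is a hypothetical file name for the script above.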

One workspace helps when the clues are scattered

When the issue spans code, docs, and environment notes, switching between five tools slows people down. In practice, many teams combine a local debugger, a linter, version control, and an AI assistant that can explain code, inspect snippets, and help reason through failures. A combined workspace is relevant if you want code explanations, debugging help, and live previews in one place rather than juggling separate tabs.

The trade-off is straightforward. More tooling doesn't make you smarter by itself. It just makes evidence easier to see. You still need the discipline to ask good questions and verify the answers.

Common Traps and Language-Specific Pitfalls

Some bugs are original. Most are sequels.

You'll save a lot of time once you recognize the recurring species of failure. Not every “what is wrong with this code” moment is unique. Sometimes it's the same old trap wearing a fresh stack trace.

The classics that still bite

A few examples show up across languages:

- Off-by-one errors: Loop bounds, array indexing, pagination windows.

- Floating-point surprises: Arithmetic that looks neat in theory and messy in memory.

- Null or undefined assumptions: You “know” the value exists until production politely disagrees.

- Mutation side effects: A helper changed shared state and now three unrelated features act haunted.

- Async timing issues: The data wasn't ready when the code expected it to be ready.

These bugs are common because they target assumptions developers stop actively checking.
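Three of these traps fit in a few lines of Python. The function names are hypothetical and the examples are deliberately tiny.

```python
import math

# Floating point: 0.1 + 0.2 is not exactly 0.3 in binary floating point.
print(0.1 + 0.2 == 0.3)              # False
print(math.isclose(0.1 + 0.2, 0.3))  # True

# Off-by-one: range(1, n) stops before n.
n = 5
print(list(range(1, n)))      # [1, 2, 3, 4]
print(list(range(1, n + 1)))  # [1, 2, 3, 4, 5]


# Mutation side effect: a mutable default argument is shared across calls.
def add_tag(tag, tags=[]):
    tags.append(tag)
    return tags


def add_tag_safe(tag, tags=None):
    tags = [] if tags is None else list(tags)
    tags.append(tag)
    return tags


first = add_tag("a")
second = add_tag("b")
print(first is second, second)  # True ['a', 'b']
print(add_tag_safe("a"), add_tag_safe("b"))  # ['a'] ['b']
```

None of these raise an error, which is why they survive review: the code reads as correct until you check the assumption directly.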

The local machine is not production

The most expensive sentence in software might be “works on my machine.”

Code that works locally but fails in production because of invisible environment differences is reportedly a top complaint in 60% of developer help threads, and a 2026 report noted a 25% drop in uptime for some apps due to these inconsistencies.

That doesn't mean every production bug is mysterious. It usually means the environments differ in ways the developer didn't account for. Runtime version. Missing environment variable. Different build behavior. Different filesystem assumptions. Different browser behavior. Same code, different world.

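A practical checklist helps: compare the runtime version, environment variables, build output, filesystem assumptions, and browser behavior between the environment that works and the one that fails.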
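Part of that comparison can be automated by capturing a small fingerprint in each environment and diffing the two. This is a sketch: the variable names in `REQUIRED_VARS` are hypothetical, and the fields collected are only a starting point.

```python
import os
import platform
import sys

# Variable names are hypothetical; list the ones your app actually reads.
REQUIRED_VARS = ["DATABASE_URL", "API_KEY"]


def fingerprint(env=None):
    env = os.environ if env is None else env
    return {
        "python": platform.python_version(),
        "os": platform.system(),
        "filesystem_encoding": sys.getfilesystemencoding(),
        # Record only presence, never the secret values themselves.
        "vars_present": {name: name in env for name in REQUIRED_VARS},
    }


def diff(local, remote):
    """Return the keys whose values differ between two fingerprints."""
    return {
        key: (local[key], remote.get(key))
        for key in local
        if local[key] != remote.get(key)
    }


# Simulated environments for the example; in practice, run fingerprint()
# once on each machine and compare the saved results.
local = fingerprint({"DATABASE_URL": "x", "API_KEY": "y"})
prod = fingerprint({"DATABASE_URL": "x"})
print(diff(local, prod))
```

Here the diff points straight at the missing `API_KEY`, the kind of difference that never shows up in a code review.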

Don't ignore tooling around the edges

A lot of “application bugs” are really setup bugs. A sloppy merge can leave stale logic, duplicate conditions, or broken conflict resolution markers hidden in places nobody revisited carefully. Review merge results carefully, because messy merges create bugs that look far more sophisticated than they really are.

For lower-level systems, the debugging surface changes but the principle doesn't. If you're working near hardware boundaries, timing, registers, or firmware behavior, you need domain-specific debugging resources, because normal app-level habits won't expose the underlying failure.

The bug is rarely “in production.” The bug is in the assumptions that only production exposed.

The trick is to stop asking whether the code is correct in your environment and start asking whether the whole system is consistent across environments.

From Frustration to Fix: A Developer's Mindset

A bug feels personal when you're stuck on it long enough. It isn't.

The useful mindset is procedural. Reproduce the bug. Isolate the failing path. Read the clues. Change one thing. Verify the fix. Then write down what the bug taught you so you don't pay for the same lesson twice.

What strong debuggers do differently

They don't try to look clever. They reduce uncertainty.

That usually means they:

- Preserve evidence: exact error text, inputs, logs, screenshots, failing state

- Test assumptions early: especially around data shape, timing, and environment

- Fix the cause, not just the symptom: no “works for now” patch unless it's clearly temporary

- Add protection afterward: a regression test, a guard clause, a lint rule, a code review note
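A guard clause plus a regression test is the smallest version of "add protection afterward." The sketch assumes a hypothetical `average` function that once crashed on an empty list; returning `0.0` for empty input is an illustrative choice, not a universal rule.

```python
import unittest


def average(values):
    """Hypothetical function that once crashed on an empty list."""
    if not values:  # guard clause added with the fix
        return 0.0
    return sum(values) / len(values)


class AverageRegressionTest(unittest.TestCase):
    def test_empty_list_does_not_crash(self):
        # Pins the exact input from the original bug report.
        self.assertEqual(average([]), 0.0)

    def test_normal_case_unchanged(self):
        self.assertEqual(average([2, 4]), 3.0)


suite = unittest.defaultTestLoader.loadTestsFromTestCase(AverageRegressionTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

The test uses the input that caused the original failure, so the same bug cannot come back without someone noticing.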

Code review matters here more than people think. Review that includes tracing all entry points and assuming malformed input has reportedly cut security bugs by 50% before deployment.

That's the part many developers discover late. Debugging isn't only about fixing today's broken branch. It's also about improving the team's future instincts.

Every nasty bug upgrades your judgment

The developers who seem calm around broken systems usually earned that calm the hard way. They've seen bad data look valid. They've watched a flaky test waste half a day. They've learned not to trust “obvious” causes without evidence.

That's why problem-solving matters more than any single framework or editor feature, and why it's a habit worth strengthening deliberately.

Debugging still won't become fun every day. Some bugs are absurd. Some are tiny and humiliating. Some turn out to be one missing character and a lesson in humility. Fine. That's part of the job.

A developer who can stay systematic while the code misbehaves is dangerous in the best way. That developer ships fixes without panic, explains the root cause clearly, and gets harder to surprise over time.

When you're stuck, a good next step isn't more guessing. It's better evidence and a tighter workflow. A single workspace can help with that by giving you one place to inspect code, get debugging suggestions, explain unfamiliar logic, and research tricky environment-specific issues without bouncing between separate tools.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

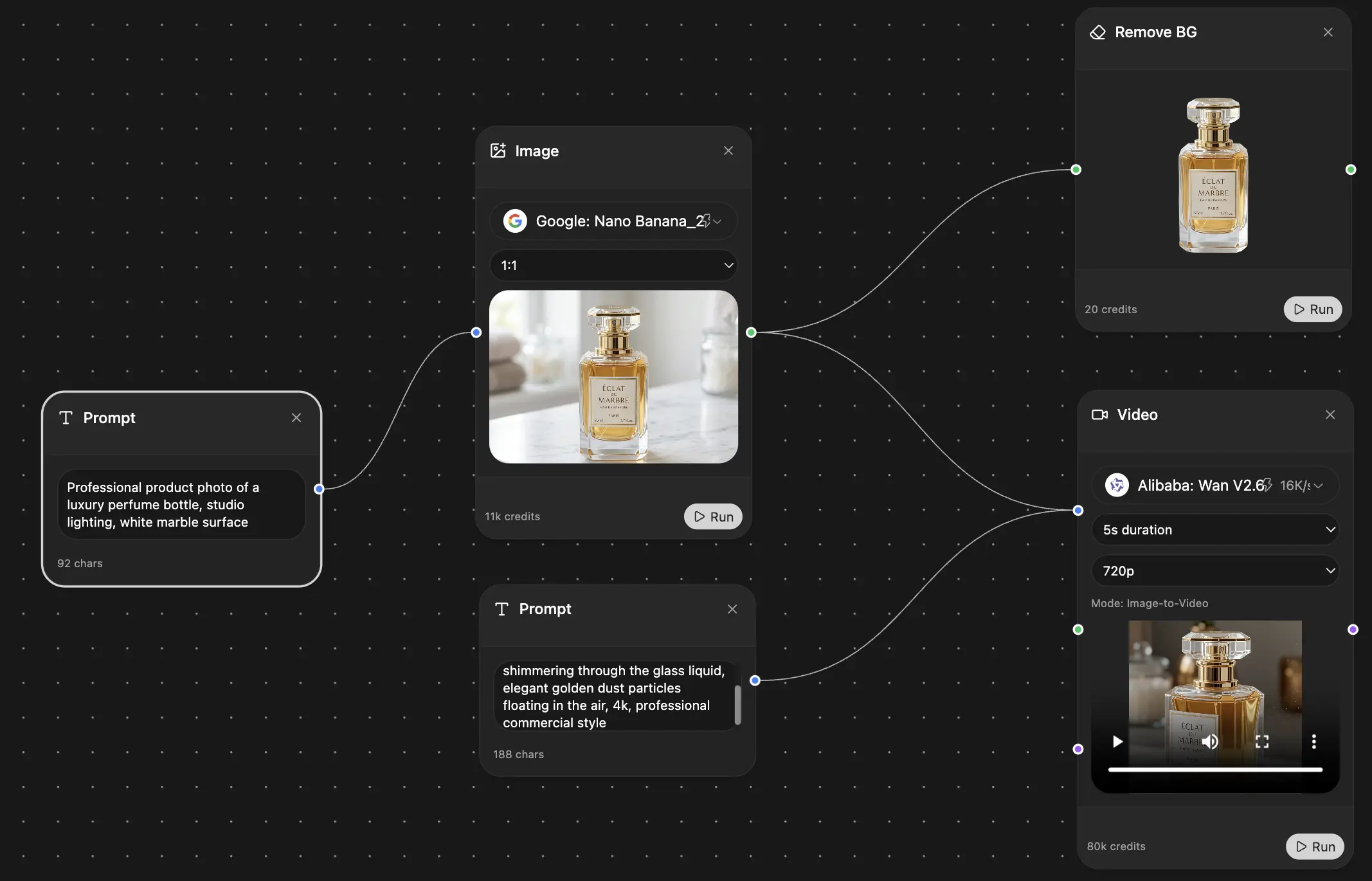

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691