Build an Unbeatable Connect 4 Bot With AI

Build a powerful Connect 4 bot from scratch. This guide covers minimax, alpha-beta pruning, and using AI tools like Zemith to code, test, and deploy your bot.

The odds are good you opened this because you had one of two thoughts.

First thought: “Connect 4 is simple. I can build a bot for that in a weekend.”

Second thought: “Why is my ‘simple’ bot playing like it learned strategy from a potato?”

Both thoughts are normal.

A good Connect 4 bot sits in a sweet spot for developers. The game is small enough to finish as a side project, but rich enough to teach the stuff that matters in AI work: state representation, search, heuristics, pruning, model evaluation, and deployment. It also gives you fast feedback. If the bot makes a terrible move, the board exposes it immediately. No hiding behind vague demos.

That’s why I like this project for junior developers. You can start with plain game logic on Friday night, add minimax on Saturday, make it fast on Sunday, and if you still have energy left, wrap it in an API so your friends can rage-quit against it by dinner.

Your Weekend Project Building a Connect 4 Bot

If you played Connect 4 as a kid, you probably remember the sound before you remember the strategy. That little plastic disc drop. The smug grin when you set up a diagonal. The crushing moment when you missed the obvious block and got roasted by a cousin who still reminds you about it.

That nostalgia makes this project fun. The game theory makes it worth building.

A Connect 4 bot is one of those rare projects that feels like a toy but teaches real engineering habits. You need a clean board model. You need legal move generation. You need a way to score positions. Then you need to decide whether you’re building a classic search bot, a learning-based bot, or a hybrid that borrows from both.

Why this project punches above its weight

You can keep the scope under control while still touching serious topics:

- Game state modeling: Boards, turns, move validity, terminal states.

- Decision making: Minimax, alpha-beta pruning, heuristics.

- Experimentation: Different evaluation functions produce noticeably different behavior.

- Real-world engineering: APIs, deployment, latency, debugging.

If you’ve also been curious about agent-style workflows, the practical thinking overlaps with this project. The same habits apply: define the environment, specify allowed actions, decide how decisions are made, then expose the system in a usable interface.

A sane weekend plan

A good flow looks like this:

- Friday night: Build the game engine.

- Saturday morning: Add win detection and move validation.

- Saturday afternoon: Implement minimax badly, then fix it.

- Sunday: Add pruning, improve heuristics, and ship a playable version.

A weekend project works when the loop is short. Write code, play three games, watch the bot do something dumb, fix one thing, repeat.

By the end, you’ll have more than a demo. You’ll have a small AI system that makes decisions under constraints, which is a much better portfolio story than “I made a button do a fetch call.”

Laying the Foundation The Smart Way

Don’t rush to the AI part before the game engine is reliable.

A Connect 4 bot only looks smart if the board logic is boring and correct. If move validation is wrong, if gravity fails in one edge case, or if diagonal wins are missed, every search result built on top of that is garbage. I’ve seen developers blame minimax for problems caused by a broken drop_piece() function.

That is why I start with the engine, then add decision-making. If you are using an all-in-one AI platform like Zemith, use it here first. Ask it to sketch test cases, compare board representations, and generate a clean first pass for your rules engine. Then review every line and play through edge cases manually. AI speeds up the draft. It does not replace the part where you prove the game works.

Pick a board representation that matches the project

A 2D array is the right default for a weekend build.

It is easy to print, easy to inspect in a debugger, and easy to reason about while you are still fixing legal moves and win detection. If your goal is to get a playable bot working by Sunday, this choice keeps the feedback loop short.

A bitboard is faster and more compact. It also raises the difficulty. Bitwise operations can make move generation and win checks much faster, but they also make early debugging harder. I would only start there if performance is part of the goal from day one, or if you already know the representation well.

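Here’s the trade-off in plain terms: a 2D array costs you some speed but stays easy to print and debug, while a bitboard buys speed and compactness at the price of harder early debugging.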

Build the rules engine before the bot

Write these five functions first:

- create_board() creates the empty state.

- is_valid_move(col) checks whether a column can accept another piece.

- drop_piece(col, player) places the piece in the lowest open row.

- check_winner(player) detects horizontal, vertical, and diagonal wins.

- get_valid_moves() returns the columns the current player can use.

That list is required because search code assumes the game state is trustworthy. If any one of these functions is flaky, your bot will evaluate impossible positions and your debugging session will head in the wrong direction.
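Here is a minimal sketch of four of those helpers, assuming a 6x7 grid stored as a list of lists with row 0 at the top and 0 marking an empty cell. The explicit `board` argument, the constants, and the integer player ids 1 and 2 are assumptions for illustration, not something the function list above dictates. Win detection is left out to keep the block short.

```python
ROWS, COLS = 6, 7
EMPTY = 0


def create_board():
    """Return an empty 6x7 grid; row 0 is the top row."""
    return [[EMPTY] * COLS for _ in range(ROWS)]


def is_valid_move(board, col):
    """A column accepts a piece if it exists and its top cell is empty."""
    return 0 <= col < COLS and board[0][col] == EMPTY


def get_valid_moves(board):
    """List every column that can still take a piece."""
    return [col for col in range(COLS) if is_valid_move(board, col)]


def drop_piece(board, col, player):
    """Place the piece in the lowest open row of col and return that row index."""
    if not is_valid_move(board, col):
        raise ValueError(f"column {col} is full or out of range")
    # Scan from the bottom row upward; the validity check guarantees a hit.
    for row in range(ROWS - 1, -1, -1):
        if board[row][col] == EMPTY:
            board[row][col] = player
            return row
```

Returning the landing row from `drop_piece` is a small convenience: a later win check can start from the cell that just changed instead of rescanning the board.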

Win detection deserves more tests than you think

Horizontal and vertical checks are usually simple. Diagonals cause the most trouble.

The bug pattern is familiar. A loop starts one row too late, ends one column too early, or scans the board with row and column indexes flipped. The result is a bot that misses a clear win or claims a win that does not exist.

Use simple scanning rules:

- Horizontal: check every four-cell window across each row.

- Vertical: check every four-cell window down each column.

- Positive diagonal: check sequences that move down-right.

- Negative diagonal: check sequences that move up-right.

Test the center of the board. Test the left and right edges. Test positions where three in a row looks close to four but is blocked. Zemith is useful here too. It can generate unit test cases quickly, but you still need to verify that the expected outcomes are correct.
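The four scanning rules can be sketched as one loop over direction vectors. This assumes the same 6x7 list-of-lists grid with row 0 at the top, so "down-right" means row and column both increase. The `DIRECTIONS` table and the explicit `board` argument are illustrative choices.

```python
ROWS, COLS = 6, 7

# (row step, column step) for each scan direction; row 0 is the top row.
DIRECTIONS = [
    (0, 1),   # horizontal: left to right
    (1, 0),   # vertical: top to bottom
    (1, 1),   # positive diagonal: down-right
    (-1, 1),  # negative diagonal: up-right
]


def check_winner(board, player):
    """Return True if player owns any four-cell window in any direction."""
    for row in range(ROWS):
        for col in range(COLS):
            for d_row, d_col in DIRECTIONS:
                end_row, end_col = row + 3 * d_row, col + 3 * d_col
                # Skip windows that would run off the board.
                if not (0 <= end_row < ROWS and 0 <= end_col < COLS):
                    continue
                if all(board[row + i * d_row][col + i * d_col] == player
                       for i in range(4)):
                    return True
    return False
```

Computing the window's end cell before reading any values is what guards against the off-by-one bugs described above: a window is either fully on the board or skipped.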

If the bot misses an obvious win, inspect the rules engine before you inspect the search code.

Model state cleanly so search code stays simple

Your bot works on a sequence of game states, not isolated board snapshots. Each state should answer a small set of questions without making the search layer guess:

- Whose turn is it?

- Which moves are legal?

- Has either player won?

- Is the board full?

- Is the state internally valid?

Put that logic behind a GameState or Board class instead of scattering it across helpers. The payoff is practical. Your minimax code can ask for legal moves and terminal states directly, without re-implementing game rules in a second place.
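One possible shape for that class, as a sketch. It assumes player 1 moves first, a 6x7 grid with row 0 at the top, and that `play` returns a new state rather than mutating the old one. Every method name here is hypothetical.

```python
from dataclasses import dataclass, field

ROWS, COLS = 6, 7


def _empty_grid():
    return [[0] * COLS for _ in range(ROWS)]


@dataclass
class GameState:
    grid: list = field(default_factory=_empty_grid)  # row 0 is the top row
    current_player: int = 1                           # assumption: player 1 starts

    def legal_moves(self):
        return [c for c in range(COLS) if self.grid[0][c] == 0]

    def play(self, col):
        """Return a new state with the current player's piece dropped in col."""
        if col not in self.legal_moves():
            raise ValueError(f"illegal move: column {col}")
        grid = [row[:] for row in self.grid]  # copy so the old state is untouched
        for row in range(ROWS - 1, -1, -1):
            if grid[row][col] == 0:
                grid[row][col] = self.current_player
                break
        return GameState(grid, 3 - self.current_player)

    def has_won(self, player):
        for r in range(ROWS):
            for c in range(COLS):
                for dr, dc in ((0, 1), (1, 0), (1, 1), (-1, 1)):
                    end_r, end_c = r + 3 * dr, c + 3 * dc
                    if not (0 <= end_r < ROWS and 0 <= end_c < COLS):
                        continue
                    if all(self.grid[r + i * dr][c + i * dc] == player for i in range(4)):
                        return True
        return False

    def is_full(self):
        return not self.legal_moves()

    def is_terminal(self):
        return self.has_won(1) or self.has_won(2) or self.is_full()

    def is_valid(self):
        """Piece counts must match the turn, and no piece may float above a gap."""
        ones = sum(row.count(1) for row in self.grid)
        twos = sum(row.count(2) for row in self.grid)
        expected_twos = ones if self.current_player == 1 else ones - 1
        if twos != expected_twos:
            return False
        for c in range(COLS):
            seen_piece = False
            for r in range(ROWS):  # scan top to bottom
                if self.grid[r][c] != 0:
                    seen_piece = True
                elif seen_piece:
                    return False
        return True
```

Copying the grid on every move is slower than mutating in place, but it removes a whole class of search bugs while the bot is still young.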

Keep the engine plain

You do not need clever abstractions yet. You need code that is easy to trust.

Use plain data structures. Print the board often. Add tests for legal moves, full columns, early wins, late wins, and draws. Let Zemith help with scaffolding, refactors, and test generation, but keep the final design readable enough that you can explain it to another developer in five minutes.

A stable engine saves hours later. Once that foundation is solid, every improvement to the bot’s decision-making means something.

The Brains of Your Bot Classical Search Algorithms

This is the point where your Connect 4 bot stops being a rules engine and starts being annoying in the best possible way.

A basic bot can choose a random legal move. That is useful for smoke testing. It is also a fantastic way to lose to anyone over the age of seven. To play well, the bot needs search.

Minimax gives your bot a personality disorder on purpose

Minimax works by assuming two things at the same time:

- your bot will always choose the best move for itself

- the opponent will always choose the best counter-move

That second assumption is why minimax feels paranoid. It plans as if the other player is sharp and slightly mean. Which is exactly what you want.

At each turn, the algorithm explores possible future boards up to a chosen depth. It alternates between maximizing the bot’s score and minimizing it on the opponent’s turn.

A stripped-down version of the logic looks like this:

- Generate legal moves.

- Apply one move.

- Recurse into the new board.

- If depth is exhausted or the game is over, score the board.

- Bubble scores back up and choose the best move.
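The steps above can be sketched as a short recursive function. This is a plain, unpruned version under a few assumptions: the board is a 6x7 list of lists with row 0 at the top, players are 1 and 2, and non-terminal positions score 0 as a placeholder until a real heuristic exists. The helper names are illustrative.

```python
import math

ROWS, COLS = 6, 7


def valid_moves(board):
    return [c for c in range(COLS) if board[0][c] == 0]


def play(board, col, player):
    """Return a copy of the board with player's piece dropped in col."""
    new = [row[:] for row in board]
    for r in range(ROWS - 1, -1, -1):
        if new[r][col] == 0:
            new[r][col] = player
            return new
    raise ValueError("column is full")


def wins(board, player):
    for r in range(ROWS):
        for c in range(COLS):
            for dr, dc in ((0, 1), (1, 0), (1, 1), (-1, 1)):
                if not (0 <= r + 3 * dr < ROWS and c + 3 * dc < COLS):
                    continue
                if all(board[r + i * dr][c + i * dc] == player for i in range(4)):
                    return True
    return False


def minimax(board, depth, maximizing, bot=1):
    """Return (score, column) from the bot's point of view."""
    opponent = 3 - bot
    if wins(board, bot):
        return 1000 + depth, None     # more depth left means a faster win
    if wins(board, opponent):
        return -1000 - depth, None    # more depth left means a faster loss
    moves = valid_moves(board)
    if depth == 0 or not moves:
        return 0, None                # placeholder evaluation, or a draw
    best_col = moves[0]
    if maximizing:
        best = -math.inf
        for col in moves:
            score, _ = minimax(play(board, col, bot), depth - 1, False, bot)
            if score > best:
                best, best_col = score, col
    else:
        best = math.inf
        for col in moves:
            score, _ = minimax(play(board, col, opponent), depth - 1, True, bot)
            if score < best:
                best, best_col = score, col
    return best, best_col
```

Calling `minimax(board, 2, True)` on a position where the bot has three in a row with the fourth cell open should return that winning column, which makes a good first tactical test.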

Alpha-beta pruning is the reason minimax is usable

Plain minimax gets slow fast. Really fast.

The practical fix is alpha-beta pruning. It keeps track of the best score the maximizing player can guarantee and the best score the minimizing player can guarantee. Once a branch becomes obviously worse than something you already found, you stop exploring it.

That means your bot skips work without changing the final answer.
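To see that claim in code, here is a sketch where one flag switches the cutoff on and off, so both runs can be compared on the same position. It assumes the usual 6x7 list-of-lists board, scores from player 1's point of view, and a placeholder score of 0 for undecided positions. The `stats` dictionary and the `prune` flag exist only for this demonstration.

```python
import math

ROWS, COLS = 6, 7


def valid_moves(board):
    return [c for c in range(COLS) if board[0][c] == 0]


def play(board, col, player):
    new = [row[:] for row in board]
    for r in range(ROWS - 1, -1, -1):
        if new[r][col] == 0:
            new[r][col] = player
            return new
    raise ValueError("column is full")


def wins(board, player):
    for r in range(ROWS):
        for c in range(COLS):
            for dr, dc in ((0, 1), (1, 0), (1, 1), (-1, 1)):
                if not (0 <= r + 3 * dr < ROWS and c + 3 * dc < COLS):
                    continue
                if all(board[r + i * dr][c + i * dc] == player for i in range(4)):
                    return True
    return False


def alphabeta(board, depth, alpha, beta, maximizing, stats, prune=True):
    """Score the position for player 1; stats["nodes"] counts visited positions."""
    stats["nodes"] += 1
    if wins(board, 1):
        return 1000 + depth
    if wins(board, 2):
        return -1000 - depth
    moves = valid_moves(board)
    if depth == 0 or not moves:
        return 0
    if maximizing:
        best = -math.inf
        for col in moves:
            child = play(board, col, 1)
            best = max(best, alphabeta(child, depth - 1, alpha, beta, False, stats, prune))
            alpha = max(alpha, best)   # best score the maximizer can guarantee
            if prune and alpha >= beta:
                break                  # the minimizer already has a better option
        return best
    best = math.inf
    for col in moves:
        child = play(board, col, 2)
        best = min(best, alphabeta(child, depth - 1, alpha, beta, True, stats, prune))
        beta = min(beta, best)         # best score the minimizer can guarantee
        if prune and alpha >= beta:
            break                      # the maximizer already has a better option
    return best
```

Run it twice from the same board with fresh `{"nodes": 0}` counters, once with `prune=False` and once with `prune=True`. The returned score should match while the pruned run visits fewer positions.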

The implementation detail matters here because the performance gain is what makes the algorithm feel real instead of academic. One documented Python implementation that combined alpha-beta pruning with bitboards reported 12,000-13,000 Monte Carlo Tree Search iterations in 5 seconds, and its heuristic scoring used values like +5 for a three-in-a-row with an empty space and -4 for an unblocked opponent threat.

The heuristic is your bot’s street smarts

Minimax is only as good as the board evaluation it uses when it cannot search to the end.

That evaluation function is the difference between:

- a bot that sets traps

- a bot that defends properly

- a bot that proudly walks into disaster

A practical heuristic usually scores short patterns inside groups of four cells, often called windows.

Good signals include:

- Immediate wins: Highest possible score.

- Three plus one empty: Strong positive score.

- Two plus two empty: Small positive score.

- Opponent threats: Negative score, often weighted heavily enough to force blocks.

If your heuristic undervalues defense, the bot plays like an overconfident intern. If it overvalues blocking, it becomes passive and misses winning chances.

Weight immediate wins and immediate losses heavily. If your evaluation treats a forced win as “nice to have,” the search will make comedy choices.
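A window-based evaluator along those lines might look like the sketch below. The weights are illustrative assumptions, not tuned values: a very large score for four in a row, +5 for three plus one empty, +2 for two plus two empty, and -4 for an opponent three with one empty. The board is assumed to be a 6x7 list of lists with 0 for empty cells.

```python
ROWS, COLS = 6, 7


def windows(board):
    """Yield every straight group of four cells as a list of cell values."""
    for r in range(ROWS):
        for c in range(COLS):
            for dr, dc in ((0, 1), (1, 0), (1, 1), (-1, 1)):
                if 0 <= r + 3 * dr < ROWS and c + 3 * dc < COLS:
                    yield [board[r + i * dr][c + i * dc] for i in range(4)]


def score_window(window, player):
    """Score one window from player's point of view (weights are assumptions)."""
    opponent = 3 - player
    mine = window.count(player)
    theirs = window.count(opponent)
    empty = window.count(0)
    if mine == 4:
        return 100_000       # already won
    if theirs == 4:
        return -100_000      # already lost
    if mine == 3 and empty == 1:
        return 5             # one move away from four
    if mine == 2 and empty == 2:
        return 2             # early potential
    if theirs == 3 and empty == 1:
        return -4            # opponent threat that still needs a block
    return 0


def evaluate(board, player):
    """Sum the window scores over the whole board."""
    return sum(score_window(w, player) for w in windows(board))
```

With three of player 1's pieces in the bottom-left corner and nothing else on the board, `evaluate` returns 7 for player 1 (one +5 window and one +2 window) and -4 for player 2. Checking a handful of positions by hand like this is a cheap way to catch a sign error before it reaches the search.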

Move ordering matters more than most first versions expect

Alpha-beta pruning gets better when you search promising moves first.

In Connect 4, center columns are often stronger because they participate in more horizontal and diagonal patterns. So if your move generator evaluates center-first instead of left-to-right, pruning usually improves.

That means two bots with the same minimax depth can feel very different in runtime because one explores smart candidates earlier.
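Center-first ordering takes only a few lines. This sketch assumes a 7-column board and a list of legal column indexes as input; the function name is hypothetical.

```python
COLS = 7
CENTER = COLS // 2


def order_moves(valid_columns):
    """Sort columns so the ones closest to the center are searched first."""
    return sorted(valid_columns, key=lambda col: abs(col - CENTER))


print(order_moves(range(COLS)))  # [3, 2, 4, 1, 5, 0, 6]
```

Because Python's sort is stable, columns at the same distance from the center keep their input order, so the result is deterministic and easy to reason about in logs.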

A practical comparison

Here’s the blunt version.

Minimax with alpha-beta pruning is the best starting point for most developers building a Connect 4 bot. It’s understandable, effective, and easy to improve incrementally.

Good implementation habits save hours

A few practical habits make a huge difference:

- Clone state carefully: Bugs from accidental board mutation are brutal.

- Separate game logic from search: Keep your board code independent from minimax.

- Log chosen moves during testing: You want to know why the bot picked column 4.

- Test known tactical positions: Forced blocks and immediate wins expose weak heuristics quickly.

What usually does not work

Three things derail early versions constantly.

First, shallow search with a lazy heuristic. The bot looks smart for two turns, then gifts the game away.

Second, slow board handling. If every move requires heavy copying and awkward scanning, your depth ceiling stays low.

Third, mixing “engine code” and “AI code” in one giant file. That makes every bug feel supernatural.

Keep the logic modular. Build the boring parts cleanly. Then let minimax be the part that sweats.

Leveling Up With Machine Learning and Advanced Tactics

You built a search-based bot. It blocks obvious threats, finds short tactical wins, and feels smart enough to beat casual players. Then you run into the next ceiling. Search alone starts getting expensive, and stronger play depends on evaluating messy midgame positions better than a hand-written heuristic can.

That is the point where machine learning starts paying for itself.

Supervised learning is the cleanest way to start

For a weekend project, supervised learning is usually the right first ML step. You collect board states, attach labels such as win, loss, draw, or best move, and train a model to score positions faster than a deeper search would.

One useful reference point comes from a comparison of several models on a Connect 4 dataset. The broad takeaway is practical. Simpler linear models can learn some structure, but stronger nonlinear methods tend to do better because Connect 4 has stacked interactions across rows, columns, diagonals, and move order.

That matches what I’d expect in real code. A linear model may be enough for a quick experiment or a cheap evaluator. If you want a model that captures stronger positional patterns, tree ensembles or a small neural network are usually better candidates.
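Before any model can train, each board has to become a fixed-length feature vector with a label. Here is one simple encoding as a sketch: 42 numbers, +1 for the player's own pieces, -1 for the opponent's, 0 for empty. The label mapping and function names are assumptions, and real projects often try richer encodings.

```python
LABELS = {"win": 1, "draw": 0, "loss": -1}


def encode_board(board, player):
    """Flatten a 6x7 grid into 42 values: +1 own piece, -1 opponent, 0 empty."""
    opponent = 3 - player
    features = []
    for row in board:
        for cell in row:
            if cell == player:
                features.append(1)
            elif cell == opponent:
                features.append(-1)
            else:
                features.append(0)
    return features


def make_example(board, player, outcome):
    """Pair the encoded board with a numeric label for a training set."""
    return encode_board(board, player), LABELS[outcome]
```

Encoding from the point of view of the player to move means one model can score positions for either side, since the same pattern always looks the same to the model regardless of piece color.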

The best use of ML is often inside a hybrid bot

Pure ML can pick moves quickly, but it does not replace tactical search well on its own. Search is still better at proving forced wins, spotting immediate losses, and handling sharp positions where one mistake ends the game.

The practical upgrade is a hybrid bot:

- use minimax or another search method for lookahead

- use a trained model to score non-terminal positions

- keep exact checks for wins, losses, and forced blocks outside the model

That setup gives you two things at once. You keep tactical reliability, and you reduce the amount of hand-tuning needed in your evaluation function.
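The third bullet is the one that is easiest to get wrong, so here is a sketch of a leaf scorer that does the exact checks first and only then asks the model. `model_score` is a hypothetical callable standing in for whatever trained evaluator you use, and `WIN_SCORE` is an arbitrary large constant.

```python
ROWS, COLS = 6, 7
WIN_SCORE = 1_000_000


def wins(board, player):
    for r in range(ROWS):
        for c in range(COLS):
            for dr, dc in ((0, 1), (1, 0), (1, 1), (-1, 1)):
                if not (0 <= r + 3 * dr < ROWS and c + 3 * dc < COLS):
                    continue
                if all(board[r + i * dr][c + i * dc] == player for i in range(4)):
                    return True
    return False


def score_position(board, player, model_score):
    """Exact results first; the learned model only scores undecided positions."""
    if wins(board, player):
        return WIN_SCORE
    if wins(board, 3 - player):
        return -WIN_SCORE
    if all(cell != 0 for cell in board[0]):
        return 0  # full board with no winner is a draw
    # Clamp so a model estimate can never outrank a proven win or loss.
    estimate = model_score(board, player)
    return max(-WIN_SCORE + 1, min(WIN_SCORE - 1, estimate))
```

A search function can call `score_position` at its leaves in place of a hand-written heuristic. The clamp matters: without it, an overconfident model could make the search prefer a guess over a forced win.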

If you are building this with an AI-assisted workflow, an all-in-one platform helps more than people expect. I’d use Zemith to research model options, sketch feature encodings, generate training scripts, and compare implementation paths before committing to one.

Reinforcement learning is interesting, but it is rarely the fastest route to a strong bot

Self-play sounds attractive because the bot can learn by playing against itself. The catch is the engineering overhead.

You need to choose a state representation, define rewards, manage exploration, save checkpoints, and watch for unstable training runs. You also need enough compute and enough patience to get through the phase where the bot learns habits that look absurd to a human player.

For a first serious bot, I would not start there. Use supervised learning or a stronger search engine first. Come back to reinforcement learning when the goal is experimentation, not just finishing a solid playable bot.

Solved positions are great training material

A smarter shortcut is to train on positions that already have perfect-play labels. That data is far more useful than noisy human games when you want your evaluator to learn what is correct.

Solved Connect 4 position datasets exist for parts of the game tree. They are valuable because they let you train on authoritative outcomes instead of approximations. For a developer, that changes the workflow. You are no longer guessing whether a position is “pretty good.” You are teaching the model from exact answers where those answers are available.

This is also where Zemith is handy in a very practical sense. You can use it to organize source material, generate preprocessing code, test encodings, and document the trade-offs between perfect labels and broader but noisier datasets in one place instead of bouncing across five tools.

Advanced tactics that still matter without ML

Machine learning is not the only way to improve the bot. A few search upgrades still produce large gains.

Iterative deepening

Iterative deepening solves a real engineering problem. Fixed-depth search looks fine until a position takes longer than expected and your bot misses its response deadline.

Search depth 1, then 2, then 3, and keep the best move from the deepest completed pass. That way the bot always has a usable answer, even under a time limit.
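A sketch of that loop, using a negamax form of alpha-beta that raises an exception when the deadline passes. It assumes the 6x7 list-of-lists board, a non-terminal starting position, and a placeholder score of 0 for undecided leaves. `SearchTimeout`, `choose_move`, and the default limits are hypothetical names and values.

```python
import math
import time

ROWS, COLS = 6, 7


class SearchTimeout(Exception):
    """Raised inside the search when the time budget runs out."""


def valid_moves(board):
    # Center-first order helps alpha-beta prune earlier.
    open_cols = (c for c in range(COLS) if board[0][c] == 0)
    return sorted(open_cols, key=lambda c: abs(c - COLS // 2))


def play(board, col, player):
    new = [row[:] for row in board]
    for r in range(ROWS - 1, -1, -1):
        if new[r][col] == 0:
            new[r][col] = player
            return new
    raise ValueError("column is full")


def wins(board, player):
    for r in range(ROWS):
        for c in range(COLS):
            for dr, dc in ((0, 1), (1, 0), (1, 1), (-1, 1)):
                if not (0 <= r + 3 * dr < ROWS and c + 3 * dc < COLS):
                    continue
                if all(board[r + i * dr][c + i * dc] == player for i in range(4)):
                    return True
    return False


def negamax(board, player, depth, alpha, beta, deadline):
    """Return (score, column) from the point of view of the player to move."""
    if time.monotonic() > deadline:
        raise SearchTimeout
    if wins(board, 3 - player):
        return -1000 - depth, None      # the previous move won the game
    moves = valid_moves(board)
    if depth == 0 or not moves:
        return 0, None
    best, best_col = -math.inf, moves[0]
    for col in moves:
        child_score, _ = negamax(play(board, col, player), 3 - player,
                                 depth - 1, -beta, -alpha, deadline)
        if -child_score > best:
            best, best_col = -child_score, col
        alpha = max(alpha, best)
        if alpha >= beta:
            break
    return best, best_col


def choose_move(board, player, time_limit=1.0, max_depth=42):
    """Deepen one ply at a time; keep the move from the last completed pass."""
    deadline = time.monotonic() + time_limit
    best_col = valid_moves(board)[0]    # fallback if even depth 1 times out
    for depth in range(1, max_depth + 1):
        try:
            _, col = negamax(board, player, depth, -math.inf, math.inf, deadline)
        except SearchTimeout:
            break                       # discard the unfinished pass
        if col is not None:
            best_col = col
    return best_col
```

The unfinished pass is thrown away on purpose. A half-searched depth can rank moves worse than the previous full pass, so the last completed result is the safer answer.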

Better move ordering

Move ordering is one of the highest-return optimizations in this project. Search strong candidates first, especially center columns, immediate wins, and urgent defensive moves. Better ordering means alpha-beta pruning cuts more branches, which means you reach more depth with the same budget.

Perfect solvers

Perfect solver work is a different class of project. The goal is no longer “play well enough.” The goal is exact play, low latency, and predictable performance under real constraints.

That brings real trade-offs. Exact search can increase memory pressure. Precomputed tables can speed up responses but complicate deployment. Aggressive optimization makes the engine faster, but it also makes the code harder to maintain. Those are worthwhile problems if you want a production-grade service. They are overkill if you are still validating the core bot.

A strong weekend bot uses search plus a solid heuristic. A stronger long-term bot uses search, learned evaluation, and solved data where it makes sense.

From Code to Cloud Deploying Your Bot as a Service

A Connect 4 bot is more fun when other people can hit an endpoint and challenge it.

A script on your laptop proves the idea. A deployed service proves the engineering.

Wrap the bot in a small API

Use FastAPI if you want the shortest path from Python function to usable service.

Your core endpoint can accept a board state and return:

- the chosen column

- whether the state is terminal

- an optional explanation or score for debugging

Keep the request payload boring. A nested array or compact board encoding is enough. Fancy schemas can wait until the bot is stable.
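To make the payload shape concrete, here is a framework-agnostic sketch of the function a route handler could call. The field names (`board`, `player`, `column`, `terminal`, `debug`) are assumptions, and `pick_column` is a placeholder policy standing in for the real search.

```python
ROWS, COLS = 6, 7


def wins(board, player):
    for r in range(ROWS):
        for c in range(COLS):
            for dr, dc in ((0, 1), (1, 0), (1, 1), (-1, 1)):
                if not (0 <= r + 3 * dr < ROWS and c + 3 * dc < COLS):
                    continue
                if all(board[r + i * dr][c + i * dc] == player for i in range(4)):
                    return True
    return False


def pick_column(board, player):
    """Placeholder policy: the legal column closest to the center.

    Swap in the real search here; player is unused by this stand-in.
    """
    legal = [c for c in range(COLS) if board[0][c] == 0]
    return min(legal, key=lambda c: abs(c - COLS // 2))


def handle_move(payload):
    """Turn a request body like {"board": [[...]], "player": 1} into a response."""
    board, player = payload["board"], payload["player"]
    winner = next((p for p in (1, 2) if wins(board, p)), None)
    is_full = all(cell != 0 for cell in board[0])
    if winner is not None or is_full:
        # Nothing to play: report the terminal state instead of a move.
        return {"column": None, "terminal": True, "debug": {"winner": winner}}
    column = pick_column(board, player)
    return {"column": column, "terminal": False,
            "debug": {"policy": "center-first placeholder"}}
```

Keeping this logic in a plain function means you can unit test it without starting a server, and the web framework layer stays a thin wrapper around it.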

Latency changes the design

Local experiments let you think forever. Web users do not.

That is why deployment exposes trade-offs you can ignore during development. Deep search may be fine on your machine but annoying over an API. Move ordering suddenly matters more. Time-limited search becomes useful. Caching repeated board states can help if users replay common openings.

There’s also a known gap here. Many tutorials explain minimax but stop short of the practical work involved in exposing a fast solver in a web setting. Developers often run into pruning efficiency and move ordering issues during deployment, which is one reason implementation help around APIs and containers is valuable.

Containerize it early

A Dockerfile saves you from the classic “works on my machine” comedy routine.

Your container only needs a few basics:

- Install dependencies.

- Copy the app code.

- Expose the API port.

- Start the FastAPI server.

That gives you a consistent package for local testing and cloud deployment. It also makes it easier to move between hosting platforms without rewriting setup every time.

Add the minimum operational guardrails

Your first deployed bot does not need enterprise infrastructure. It does need a few adult decisions:

- Request validation: Reject impossible board states.

- Timeouts: Don’t let one search hang forever.

- Logging: Record inputs, chosen moves, and errors.

- Versioning: Keep your API contract stable if you change the payload later.
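Request validation is the guardrail that most directly protects the search, so here is a sketch of it. It assumes the payload carries a 6x7 nested list plus the id of the player to move, and that player 1 always moves first. The function name and the error strings are illustrative.

```python
ROWS, COLS = 6, 7


def validate_board(board, player):
    """Return a list of problems; an empty list means the request looks legal."""
    shape_ok = (
        isinstance(board, list)
        and len(board) == ROWS
        and all(isinstance(row, list) and len(row) == COLS for row in board)
    )
    if not shape_ok:
        return ["board must be a 6x7 nested list"]
    if any(cell not in (0, 1, 2) for row in board for cell in row):
        return ["cells must be 0 (empty), 1, or 2"]
    if player not in (1, 2):
        return ["player must be 1 or 2"]

    errors = []
    ones = sum(row.count(1) for row in board)
    twos = sum(row.count(2) for row in board)
    # Assumes player 1 moves first, so counts are equal on player 1's turn.
    expected_ones = twos if player == 1 else twos + 1
    if ones != expected_ones:
        errors.append("piece counts do not match whose turn it is")

    for col in range(COLS):
        column = [board[row][col] for row in range(ROWS)]  # top to bottom
        first_piece = next((i for i, cell in enumerate(column) if cell != 0), ROWS)
        # Any empty cell below the topmost piece means a piece is floating.
        if 0 in column[first_piece:]:
            errors.append(f"column {col} has a floating piece")
    return errors
```

Returning a list of messages instead of raising on the first problem makes the API friendlier to debug, since a client sees everything wrong with its payload in one response.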

These concerns are not specific to games. Many of the same ones show up once any model or decision system leaves your laptop.

Hosting choices for a weekend build

For a side project, keep hosting simple.

A practical deployment mindset matters as much as the bot itself.

The true win is not “my bot is online.” It’s “my bot is online, responds consistently, and doesn’t melt when three friends spam the endpoint at once.”

The Final Boss Your Deployed Connect 4 Bot

A finished Connect 4 bot is a sneaky good milestone.

You started with a toy problem and ended up practicing the same habits that show up in larger systems. You modeled state carefully. You separated rules from decision logic. You optimized when brute force failed. You made trade-offs once latency and deployment entered the room.

That’s real engineering.

The nice part is how transferable the workflow is. Today it’s Connect 4. Tomorrow it could be Tic-Tac-Toe for a beginner tutorial, a chess tactics trainer, a puzzle solver, or a scheduling assistant that uses the same pattern of state, actions, scoring, and constraints.

A lot of developers get stuck because they think serious AI work starts with giant datasets and complicated infrastructure. It doesn’t have to. A small project with clear rules teaches discipline faster than a flashy project with fuzzy goals.

If your bot is deployed and making solid moves, you already did the hard part. Everything after that is iteration.

Make the difficulty adjustable. Add a front end. Let two bots play each other. Store game histories. Add explanations for why the bot chose a move. That last one is especially fun, because users love a strong bot but they really love one that politely explains their mistake. Brutal, but polite.

Frequently Asked Questions About Connect 4 Bots

What language should I use for a Connect 4 bot?

Use the language you can finish in.

Python is the easiest starting point for most developers because the syntax is friendly and libraries are abundant. It’s also perfectly reasonable for a strong minimax bot if your implementation is careful.

If raw speed becomes the priority, you can always port the hot path later.

Should I start with minimax or machine learning?

Start with minimax plus alpha-beta pruning.

That route teaches the game properly and gives you a bot that behaves predictably. Machine learning is more interesting once you already have a baseline and want to compare learned evaluation against hand-built heuristics.

How do I make the bot easier or harder?

The simplest knob is search depth.

A shallower bot feels more human and makes mistakes. A deeper bot becomes tougher. You can also tweak the heuristic weights so the bot plays more aggressively or more defensively, but depth is usually the cleanest difficulty setting.

Why is my bot still slow?

Usually one of these is the problem:

- Board operations are expensive: Too much copying or awkward scanning.

- Move ordering is weak: The bot explores bad branches first.

- No pruning: Plain minimax gets expensive quickly.

- Debug code is still running: Verbose logging can slow search loops.

Profile before guessing. Developers love guessing. Profilers love proving them wrong.

Do I need a perfect solver?

No. Not for a fun and strong weekend project.

A perfect solver is great if your goal is exact play, research, or a polished production challenge mode. For most developers, a strong heuristic search bot already teaches the right lessons and feels impressive in play.

How should I test the bot?

Mix unit tests and play tests.

Unit tests should verify move legality, gravity, and win detection. Play tests should include obvious tactical boards like immediate wins, forced blocks, and trap setups. If the bot misses a one-move win, stop adding features and fix that first.

Can I expose the bot through a web app?

Yes, and you should if you want the project to feel complete.

A tiny API plus a basic front end is enough. Even a minimal interface where a user clicks a column and gets the bot response back makes the project much more shareable.

What makes this a good portfolio project?

It shows more than coding syntax.

You can demonstrate algorithm design, optimization, debugging, architecture, and deployment in one compact repo. That’s much stronger than a generic CRUD app clone, and much easier to explain in an interview.

If you want one workspace for research, writing, coding, debugging, and shipping projects like this without juggling a pile of separate tools, take a look at Zemith. It’s a practical fit for developers who want to move from idea to working software faster, especially on projects where you need code help, notes, and AI-assisted iteration in the same place.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

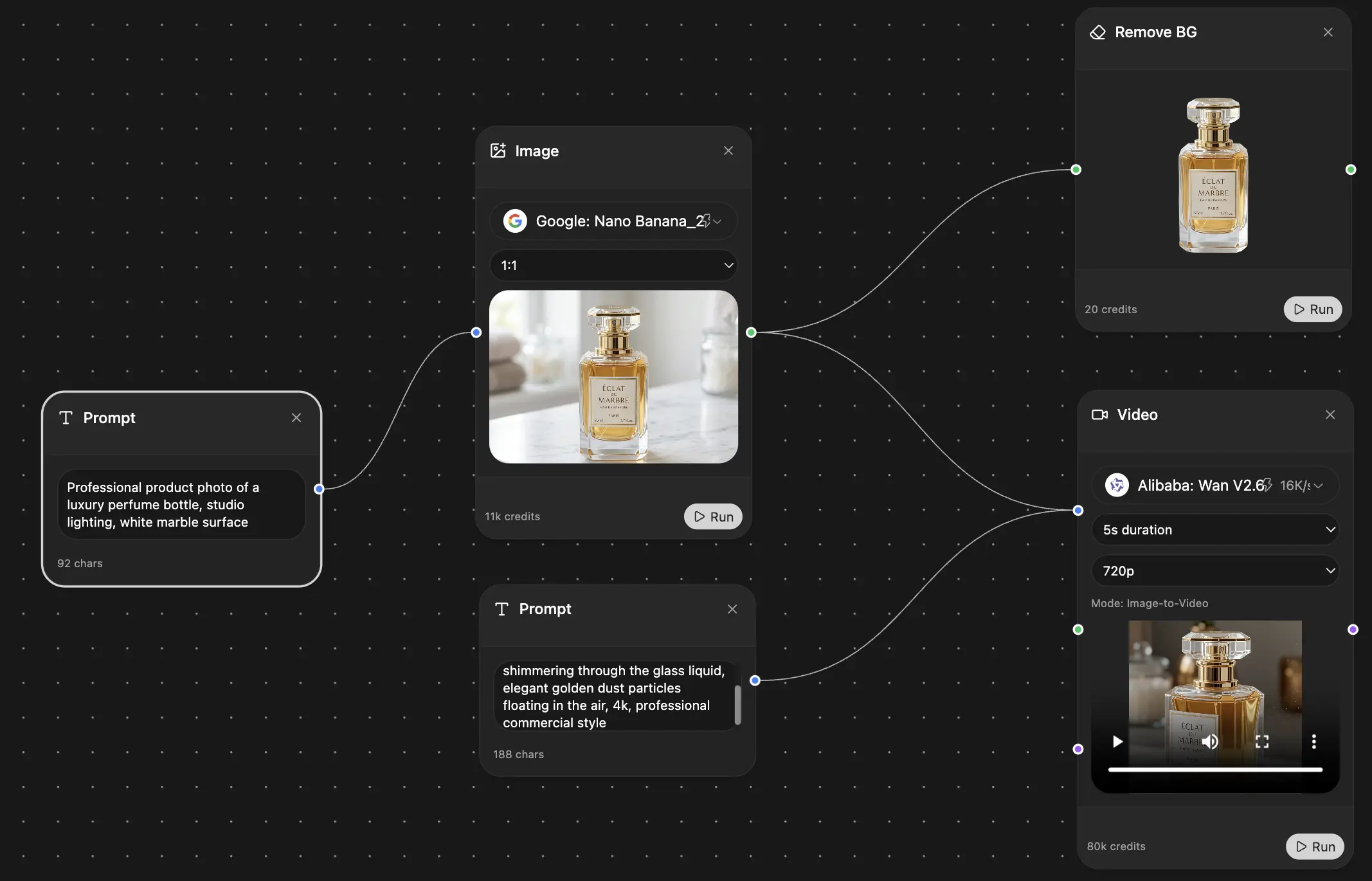

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691